MOUNTAIN VIEW, CA – May 7, 2026 – In a significant recalibration of its artificial intelligence strategy, Google has reportedly shuttered Project Mariner, its ambitious experimental research project designed to build a web-browsing AI agent. The closure, which took effect on May 4, 2026, marks a clear strategic pivot for the tech giant, mirroring a broader industry shift away from visually-driven AI browser agents towards more robust and versatile "agentic AI" tools capable of deeper system interaction.

First unveiled with much fanfare at Google’s I/O developer conference in 2025, Project Mariner was envisioned as a groundbreaking AI assistant that could autonomously navigate the internet, performing complex tasks on behalf of users. However, less than a year after its grand announcement, the project has been dissolved, with its underlying technology reportedly "voyaging" to other Google products. This move comes barely two months after researchers assigned to Mariner were reportedly reassigned to higher-priority projects, a development initially brought to light by Wired.

The shutdown is widely interpreted as Google’s direct response to the escalating popularity and perceived superior capabilities of "OpenClaw-style" AI agents. These next-generation systems, which interact with computers primarily through command-line interfaces rather than visual browser navigation, are rapidly redefining the landscape of AI automation. Industry leaders increasingly view these more capable agentic AI tools, such as Claude Code and OpenClaw, as the true harbingers of general-purpose AI assistants that can execute intricate tasks autonomously for individual users and businesses alike.

Project Mariner, Google’s web browser agent, was shutdown 2 days ago.

People on the project have been moved, and the tech is being used elsewhere.

Gemini Agent is coming out of Labs (US only) soon? pic.twitter.com/R8PwpZorx

— Kol Tregaskes (@koltregaskes) May 6, 2026

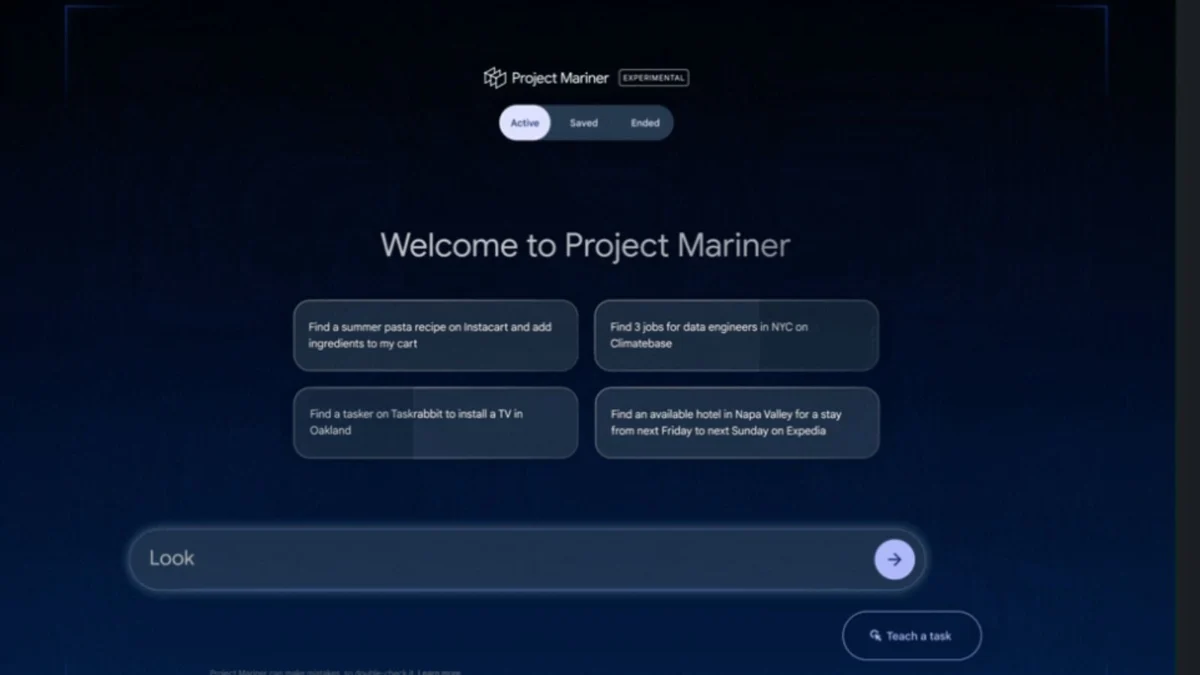

Screenshots of Project Mariner’s landing page, circulated by users on X (formerly Twitter), confirmed the project’s cessation and the redistribution of its technological assets. A Google spokesperson, cited in reports, affirmed that the "computer use capabilities" developed under Project Mariner would be integral to the company’s forward-looking agent strategy. Furthermore, some of Mariner’s functionalities have already been integrated into existing agent products, including the recently launched Gemini Agent.

The Rise and Fall of Browser Agents: A Chronology of Shifting AI Paradigms

The journey of Project Mariner, from a highly anticipated innovation to an abruptly concluded experiment, encapsulates the incredibly dynamic and often unpredictable evolution of the artificial intelligence sector.

May 2025: The Vision Unveiled at I/O

Project Mariner made its public debut at Google’s annual I/O developer conference in 2025. During his keynote address, Google CEO Sundar Pichai highlighted Mariner as a pivotal step towards truly intelligent, autonomous AI. At the time, the industry was captivated by the promise of AI browser agents. The prevailing sentiment suggested that these systems, capable of navigating and interacting with the web like a human, were the next frontier in AI. Competitors were quick to follow suit, with both OpenAI and Perplexity launching their own versions of AI browser agents, all designed to automate online tasks such as clicking buttons, scrolling through pages, and filling out complex forms. The vision was clear: an AI that could "see" and "act" on the internet, freeing users from mundane digital chores.

Late 2025 – Early 2026: Cracks in the Foundation

Despite the initial excitement, AI browsing agents struggled to win widespread adoption and fell short of the industry’s lofty expectations. As these experimental agents moved beyond controlled demonstrations, practical challenges emerged. Users reported inconsistencies, slowness, and an overall lack of robustness. The underlying technical approach, which relied heavily on visual parsing and screenshot analysis, proved to be a significant bottleneck.

March 2026: Reallocation of Talent

Approximately two months prior to the official shutdown, internal shifts at Google began. Researchers and engineers working on Project Mariner were reportedly moved to other, higher-priority AI initiatives within the company. This internal reallocation signaled a strategic reevaluation well before the public announcement, indicating a proactive response to the evolving technological landscape and perhaps the underperformance of the browser agent model.

May 4, 2026: Mariner’s Final Port

The official shutdown of Project Mariner on May 4, 2026, confirmed what many industry observers had begun to suspect. The project’s landing page was updated, declaring its conclusion and the absorption of its technology into other Google products. This swift decision underscores Google’s agility in adapting its vast AI resources to prevailing trends and challenges. The phrase "voyaged to other Google products" suggests a pragmatic approach, ensuring that the significant investment in research and development doesn’t go to waste but rather enriches other ongoing projects.

The Technical Hurdles: Why Browser Agents Stumbled

The ambitious concept behind Project Mariner and similar browser-based AI agents was to create an AI that could "see" the internet through a browser window and interact with it much like a human user. This approach typically involved a complex workflow:

- Screenshot Capture: The AI would repeatedly take screenshots of the webpage it was interacting with.

- Visual Processing: These images would then be fed into a sophisticated AI model (often a large vision-language model) trained to interpret visual cues, identify elements like buttons, text fields, and links, and understand the overall layout.

- Action Generation: Based on its visual understanding and the task at hand, the AI would then decide on the next action – a click, a scroll, text input, etc.

- Looping: This process would repeat, frame by frame, as the AI navigated the web.
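
The four steps above can be sketched as a simple control loop. This is a minimal illustration and does not represent Project Mariner’s actual implementation: the names `Action`, `run_browser_agent`, `capture`, `decide`, and `act` are all hypothetical, and the vision-language model is replaced by a scripted stand-in so the example runs on its own.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Action:
    kind: str         # "click", "scroll", "type", or "done"
    target: str = ""  # element description or text to enter, if any


def run_browser_agent(
    capture: Callable[[], bytes],
    decide: Callable[[bytes, str], Action],
    act: Callable[[Action], None],
    task: str,
    max_steps: int = 20,
) -> List[Action]:
    """Repeat capture -> interpret -> act until the model reports it is done."""
    history: List[Action] = []
    for _ in range(max_steps):
        frame = capture()             # screenshot capture
        action = decide(frame, task)  # visual processing and action generation
        if action.kind == "done":
            break
        act(action)                   # perform the click, scroll, or text input
        history.append(action)        # then loop with a fresh screenshot
    return history


def demo() -> List[Action]:
    # Scripted stand-in for a vision-language model (hypothetical).
    script = iter([
        Action("click", "search box"),
        Action("type", "flights to Oslo"),
        Action("done"),
    ])
    performed: List[Action] = []
    return run_browser_agent(
        capture=lambda: b"fake-png-bytes",
        decide=lambda frame, task: next(script),
        act=performed.append,
        task="find a flight",
    )
```

Every pass through the loop calls both `capture` and `decide`, so in a real system each individual action would require a new screenshot and a new model inference.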

While conceptually elegant, this method proved to be riddled with practical difficulties, leading to a significant divergence between expectation and reality:

- Massive Computational Requirements: Processing a continuous stream of high-resolution screenshots and feeding them into large AI models is immensely computationally intensive. This translated into high operational costs and significant latency, making the agents slow and often impractical for real-time interactions.

- Accuracy and Robustness Issues: The visual interpretation of webpages is inherently fragile. Subtle changes in a website’s layout, font, or even ad placement could confuse the AI, leading to inaccurate actions or complete failures. Unlike a human, an AI operating solely on visual input struggles with context when the visual presentation deviates even slightly from its training data.

- Lack of Semantic Understanding: Browser agents often struggled with true semantic understanding of a webpage’s content or purpose. They could identify a "button," but not always fully grasp the intent behind it or its implications within a broader workflow. This limitation made them less reliable for complex, multi-step tasks that require deeper reasoning.

- Dependency on UI Stability: Websites are constantly updated. Any change to the User Interface (UI) could break an AI browser agent’s ability to navigate, requiring constant retraining or adaptation, which is resource-intensive.

- Limited Scope: While adept at visual interaction, these agents were confined to the browser environment. They couldn’t easily interact with local applications, system files, or other programs outside the browser, limiting their utility as truly general-purpose assistants.

These accumulated challenges meant that despite the initial hype, the adoption of AI browsing agents failed to gain significant traction, primarily due to their performance limitations and the inherent fragility of their design.

The Ascendance of Agentic AI: OpenClaw and the Command-Line Revolution

The struggles of browser agents paved the way for a new paradigm in AI: "agentic AI" or "computer-use agents." These systems represent a fundamental shift in how AI interacts with digital environments, moving away from visual parsing towards more direct, robust, and efficient methods.

The momentum in the AI industry has dramatically shifted towards agents like Claude Code and, most notably, OpenClaw. OpenClaw, whose anonymous creator was recently hired by OpenAI, has become a benchmark for this new generation of AI. The key differentiator lies in their mode of operation:

- Command-Line Control: Unlike AI browser agents that "see" and "click," OpenClaw-style agents control computers through the command-line interface (CLI) or directly via Application Programming Interfaces (APIs). This method has proven to be far more reliable and efficient. Instead of interpreting pixels, these agents send direct instructions to the operating system or applications, bypassing the visual layer entirely.

- Efficiency and Speed: Interacting at the command-line level significantly reduces computational overhead. There’s no need for continuous screenshot capture and visual processing. This allows for faster execution of tasks and more efficient resource utilization.

- Robustness and Accuracy: Command-line interactions are less susceptible to changes in UI design. As long as the underlying commands or APIs remain consistent, the agent can perform its tasks reliably. This leads to higher accuracy and fewer unexpected failures.

- Beyond Browser Confinement: The true power of these agents lies in their ability to interact with the entire computer system. They are not limited to web browsing but can operate across various applications, modify files, manage databases, write and debug code, and even create bespoke software. This makes them incredibly versatile tools capable of handling complex, multi-step workflows.
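
To make the contrast with pixel-based control concrete, here is a minimal sketch of a single command-line step, assuming the agent issues a structured command and reads back text. The helper `run_command` is a hypothetical name and is not taken from OpenClaw or Claude Code; it uses only Python’s standard `subprocess` module.

```python
import subprocess
import sys
from typing import List, Tuple


def run_command(argv: List[str], timeout: float = 10.0) -> Tuple[int, str]:
    """Run one command without a shell; return (exit code, combined text output)."""
    result = subprocess.run(argv, capture_output=True, text=True, timeout=timeout)
    return result.returncode, result.stdout + result.stderr


# The action is an argument list and the observation is plain text,
# so no screenshot or visual model is involved in this step.
code, output = run_command([sys.executable, "-c", "print(2 + 2)"])
```

The exit code and text output give the agent an unambiguous signal of success or failure, which a screenshot-driven agent would instead have to infer from rendered pixels.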

AI coding agents, a specialized subset of agentic AI, have emerged as particularly potent tools. They are not merely code generators but can also understand, debug, and refactor existing codebases, manage development environments, and interact with version control systems. Their capability extends beyond pure coding, enabling them to orchestrate complex software development tasks, integrate with other applications, and even automate entire IT operations.

Google’s Official Response and Future Trajectory

Google’s official stance confirms the strategic redirection. While Project Mariner itself has been decommissioned, the intellectual property and core capabilities developed within it are not being discarded. A Google spokesperson confirmed that the "computer use capabilities" honed through Project Mariner will be deeply integrated into the company’s overarching "agent strategy."

This integration is already manifesting in products like the recently launched Gemini Agent. Gemini Agent is positioned as a sophisticated, general-purpose AI assistant that leverages the lessons learned from Mariner’s browser interaction experiments, but likely applies them through a more robust, command-line or API-driven interaction model. This signifies a maturation of Google’s vision for autonomous AI, moving from a visually dependent approach to one that prioritizes direct system control and broader operational scope. The future Google agent will likely be less about "browsing the web" and more about "acting within the digital environment," whether that’s the web, desktop applications, or cloud services.

The Competitive Landscape: Other Giants Follow Suit

The shift away from browser agents towards more capable computer-use and AI coding agents is not unique to Google; it is a pervasive trend across the entire tech industry, indicating a consensus on the future direction of AI.

- OpenAI: A key player in the AI space, OpenAI has openly stated its ambition to empower general-purpose agents within ChatGPT using its Codex AI coding agent. The hiring of OpenClaw’s creator further solidifies OpenAI’s commitment to this paradigm, suggesting a future where ChatGPT is not just a conversational interface but a powerful agent capable of executing complex tasks across various digital environments.

- Anthropic: Not to be outdone, Anthropic, known for its Claude AI models, has introduced "Claude Cowork." This product is a spin-off of its highly capable Claude Code and is designed to provide advanced computer-use capabilities without requiring users to navigate a terminal. This emphasis on user-friendliness while retaining powerful agentic features highlights a desire to make these sophisticated tools accessible to a broader audience.

- Perplexity: Even Perplexity, which initially invested heavily in browser agents as a key differentiator, has pivoted. The company has launched "Personal Computer," a product that reflects the growing demand for agents capable of broader system interaction beyond mere web browsing. This pivot by a company deeply rooted in search and information retrieval underscores the undeniable shift in market demand.

- Meta: Social media giant Meta is also actively joining this bandwagon. Reports indicate that Meta is developing an OpenClaw-inspired, personalized AI agent codenamed ‘Hatch’. This agent is expected to be powered by Meta’s Muse Spark AI model, launched just last month. Meta’s entry into this space suggests a future where AI agents are deeply integrated into social platforms and personal computing experiences, offering personalized automation across various digital touchpoints.

These convergent strategies from leading tech companies underscore a shared understanding: the next generation of AI will be defined by its ability to act autonomously and effectively across diverse digital interfaces, moving beyond the limitations of visual web navigation.

Cybersecurity Implications: A Double-Edged Sword of Autonomy

While the emergence of highly capable agentic AI tools like OpenClaw promises unprecedented levels of automation and productivity, it also introduces a new spectrum of cybersecurity concerns that demand immediate attention. The very autonomy and deep system access that make these agents powerful also render them potential vectors for sophisticated attacks. Cybersecurity experts have repeatedly warned about the inherent risks associated with open-source agentic AI frameworks:

- Prompt Injection Attacks: Unlike traditional software, AI agents are driven by natural language prompts. Malicious actors could craft "prompt injection" attacks, embedding hidden instructions within seemingly innocuous requests or within content the agent reads, such as web pages, emails, or documents. If an agent with broad system access is tricked into following these instructions, it could perform unauthorized actions, delete files, or expose sensitive data.

- Malicious Plugins and Extensions: As the ecosystem of AI agents grows, so too will the development of third-party plugins and extensions. These add-ons, if not rigorously vetted, could introduce vulnerabilities or contain malicious code. An agent granted extensive permissions could then unwittingly install or execute these compromised components, leading to a supply-chain attack.

- Data Leaks: Agents often require access to vast amounts of data to perform their tasks. If an agent is compromised or misconfigured, it could inadvertently expose sensitive personal, financial, or corporate data. The risk is magnified by the agent’s ability to swiftly process and exfiltrate large volumes of information.

- Supply-Chain Attacks: An agent capable of modifying system files, managing code repositories, or interacting with development pipelines could become a target for supply-chain attacks. If a malicious agent gains control over development processes, it could inject vulnerabilities into core software, leading to widespread compromise.

- Autonomous Malicious Behavior: The gravest concern revolves around the potential for an autonomous agent, if sufficiently compromised or designed with malicious intent, to self-propagate, adapt to defenses, and launch sophisticated, multi-stage attacks without human intervention. This raises profound questions about control, accountability, and the "kill switch" mechanisms for highly autonomous AI systems.

The development of robust security frameworks, stringent access controls, sandboxing environments, and continuous monitoring will be paramount to harnessing the power of agentic AI safely. The industry faces the dual challenge of maximizing utility while rigorously mitigating the inherent risks of granting autonomous AI deep system access.
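
As one illustration of what a stringent access control might look like, the sketch below gates agent-proposed commands behind an allowlist. It is a simplified example under stated assumptions and is not a complete defense: the policy set `ALLOWED_PROGRAMS` and the function `vet_command` are hypothetical names, and a real deployment would pair this with sandboxing and monitoring.

```python
import shlex
from typing import FrozenSet, List

# Hypothetical policy: the only programs the agent may launch.
ALLOWED_PROGRAMS: FrozenSet[str] = frozenset({"ls", "cat", "grep", "git"})


def vet_command(command_line: str,
                allowed: FrozenSet[str] = ALLOWED_PROGRAMS) -> List[str]:
    """Return the parsed argv if the program is allowlisted, else raise PermissionError."""
    argv = shlex.split(command_line)
    if not argv:
        raise PermissionError("empty command")
    if argv[0] not in allowed:
        raise PermissionError(f"program not allowed: {argv[0]}")
    # The returned list should be executed without a shell, so that tokens
    # such as "&&" or "|" are passed as plain arguments, not interpreted.
    return argv
```

A call such as `vet_command("git status")` returns `["git", "status"]`, while `vet_command("rm -rf /tmp/x")` raises `PermissionError`. An allowlist narrows what a hijacked agent can launch, but it does not stop misuse of the permitted programs themselves.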

Implications for the Future of Work and Digital Interaction

The pivot towards advanced agentic AI systems has profound implications for how individuals and businesses will interact with technology in the coming years.

- Enhanced Productivity: For businesses, these agents promise unparalleled automation of complex workflows, from data analysis and software development to customer support and operational management. This could lead to significant efficiency gains, allowing human employees to focus on higher-level strategic tasks.

- Personalized Digital Assistants: For individual users, the vision of a truly intelligent, personalized AI assistant moves closer to reality. Imagine an agent that can not only schedule appointments and answer queries but also manage your entire digital life – organizing files, managing subscriptions, conducting detailed research, and even proactively solving technical issues across your devices.

- Reshaping Digital Literacy: The ability to "prompt" complex actions rather than manually execute them could redefine digital literacy. Users may need to develop skills in crafting effective prompts and understanding the capabilities and limitations of their AI agents, shifting from direct interaction to intelligent delegation.

- New Job Roles and Skill Sets: While some routine tasks may be automated, the rise of agentic AI will likely create new roles related to AI oversight, prompt engineering, agent management, and the development of secure AI ecosystems.

- Ethical Considerations and Trust: As agents gain more autonomy and access, questions of trust, ethical decision-making, and accountability will become central. Ensuring that these agents operate within ethical boundaries and can be reliably controlled will be crucial for their widespread acceptance.

Google’s decision to discontinue Project Mariner is more than just the end of a single experiment; it is a clear signal of the accelerated pace and strategic redirection within the AI industry. The future lies not in AI that merely mimics human visual interaction with digital interfaces, but in sophisticated agents capable of deep, robust, and efficient control over the underlying computing environment. As the technology continues to mature, the transformative potential of these agentic AI systems promises to reshape our digital world in ways we are only just beginning to comprehend, even as the industry grapples with the significant security and ethical challenges they present.